Avoid these big-data mistakes and drive insight through effective analytics

We sit on a significant amount of data that we struggle to leverage effectively. The challenges we face are plenty – from not having simplified and automated access to the data, to not being able to find the data in a timely manner (sometimes not at all), to making incorrect assumptions about the data, to investing in (the wrong) technology without having a clearly charted purpose, to progress that is not as fast as anticipated.

The last three years have seen a surge in big-data initiatives at financial institutions followed by a plateauing/fizzling out of potentially valuable outcomes. A few financial institutions are doing really well with these analytics initiatives, but these are only a handful. After a careful assessment of over 300 big-data projects at financial institutions, I would humbly like to suggest some prescriptive ideas.

Charting a Clearly Defined Data Strategy

- Identify sources of data – internal and external

- Think about the questions you will ask of the potentially assimilated data – what is it you want to do?

- Evaluate the value of answering each of the questions (ROI)

- Prioritize a few mini-projects, execute a pilot, and keep improving

- Plan to scale, and identify solution providers/partners who can help you with this journey

Most financial institutions talk about the first four bullets but start with number five. This approach quickly turns the project upside down, because the solution provider/partner leading the project brings a significant amount of bias to the problem that needs to be solved.

Measuring the ROI

- Will this help you drive more engagement with consumers?

- Will you be able to reduce the cost of operations?

- Will you be able to drive incremental, attributable revenue?

- Will this help you become more efficient?

- Will your big-data initiative help you with compliance?

Each of the questions above can be applied to the initiatives you choose, and some apply to more than one initiative. For example, better engagement could mean both lower churn and greater adoption of additional products and services by consumers. Conversely, compliance, efficiency, and lower cost could all apply to a single initiative. Apply these measures to each of your potential questions/projects to decide how to prioritize them. Also compare how difficult each potential project is to implement, so you can weigh effort against return.
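As a rough illustration of weighing effort against return, here is a minimal Python sketch. The 0 to 5 scoring scale, the 1 to 5 difficulty scale, the return-divided-by-difficulty formula, and the three example projects are all assumptions made up for illustration; they are not a prescribed method, and you would substitute your own criteria, weights, and numbers.

```python
from dataclasses import dataclass

# The five ROI questions from the list above, used as scoring criteria.
CRITERIA = ("engagement", "cost_reduction", "revenue", "efficiency", "compliance")


@dataclass
class Project:
    name: str
    scores: dict      # criterion -> 0..5 (hypothetical scale; missing means 0)
    difficulty: int   # 1 (easy) .. 5 (hard), also a hypothetical scale

    def roi_score(self) -> int:
        # Total expected return across all five criteria.
        return sum(self.scores.get(c, 0) for c in CRITERIA)

    def priority(self) -> float:
        # Simple return-per-unit-of-effort ratio (an assumed formula).
        return self.roi_score() / self.difficulty


def prioritize(projects):
    # Highest return per unit of effort first.
    return sorted(projects, key=lambda p: p.priority(), reverse=True)


# Made-up candidate projects, for illustration only.
projects = [
    Project("Churn early-warning", {"engagement": 5, "revenue": 4}, difficulty=4),
    Project(
        "Automated compliance reports",
        {"compliance": 5, "cost_reduction": 3, "efficiency": 4},
        difficulty=3,
    ),
    Project("Branch traffic dashboard", {"efficiency": 2, "engagement": 1}, difficulty=1),
]

for p in prioritize(projects):
    print(f"{p.name}: return={p.roi_score()}, effort={p.difficulty}, "
          f"priority={p.priority():.2f}")
```

With these made-up numbers, the compliance project ranks first because it scores on three criteria at moderate difficulty, while the churn project ranks last despite high scores because it is the hardest to implement. A simple ratio like this is only a conversation starter; the real value is in forcing each project to be scored against the same questions.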

Three Big Roadblocks

- Process – not having a clear roadmap, and the potential of enterprise-wide disruption

- Technology – siloed data, poor quality data, inability to collectively view data

- Personnel – lack of data analytics expertise and a data-driven culture

Big-Data Analytics Maturity (step-by-step expectations)

To keep things simple, I will break this down into three stages of maturity.

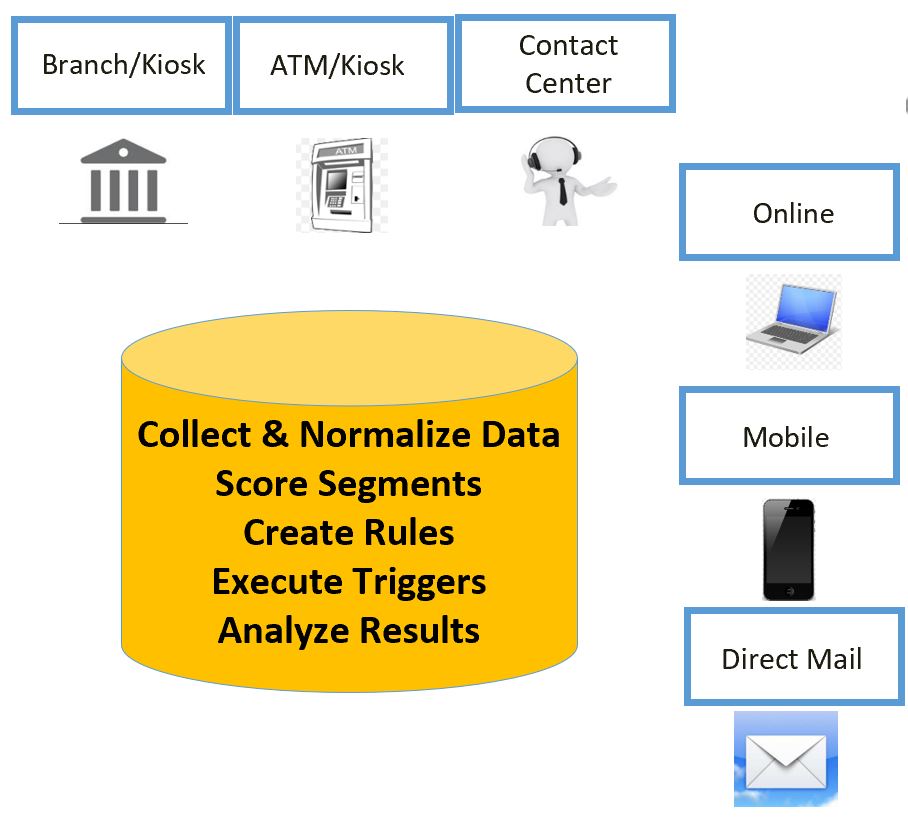

- Collecting, validating, and consolidating data/information

- Visualization of data/information, reporting, interactive analytics

- Predictive analytics, artificial intelligence, and automation

None of the financial institutions behind the 300+ projects I assessed is focused on the most important aspect of having automated access to data: stage three. There are three reasons for this: it takes too long for the data to come together, they simply do not have enough data to be statistically relevant in the prediction process, and the programming language being used is not capable of predictive analytics.

Some Lame Excuses

I list five quotes from five leaders about their big-data initiatives and sincerely hope that you too find the irony in their statements. I have added my own commentary to each statement.

- “I have the tallest building in town. I don’t need data to tell me who is coming to my financial institution.” Complete arrogance.

- “I gave away all my historical data to a printing company. They have given me a great rate on printing for the next five years. That to me is great ROI.” Ethics? Privacy? Selling your most profitable asset?

- “I cannot afford to pay for an expensive database programmer.” Complete myopia.

- “Just two more years, and it is someone else’s problem (about to retire).” Abdication of responsibility.

- “I already have all the data I need.” And yet, they cannot execute simple transactions.

One executive even told me that they were doing predictive churn analytics with a partner. I quickly learned that the partner was predicting churn with under 10,000 transactional records. A successful financial institution I know predicts churn with 88% confidence using over 1 million transactional records. If your provider/partner tells you they can predict without scale, you are better off hiring a fortune teller.

Doing it Correctly

Building a foundational big-data strategy is important. Think about the following factors: data audit, data assimilation, data cleansing, automation, data governance, quality control, and data sharing. You need a proper process that begins with knowing what problem you want to solve.

Move beyond the fundamentals of reporting. Big-data analytics should not be the glorification of reporting. You need a complete picture of what is going on. Use this process to cleanse your financial institution's reporting process and reports. Minimize reporting, and make it actionable.

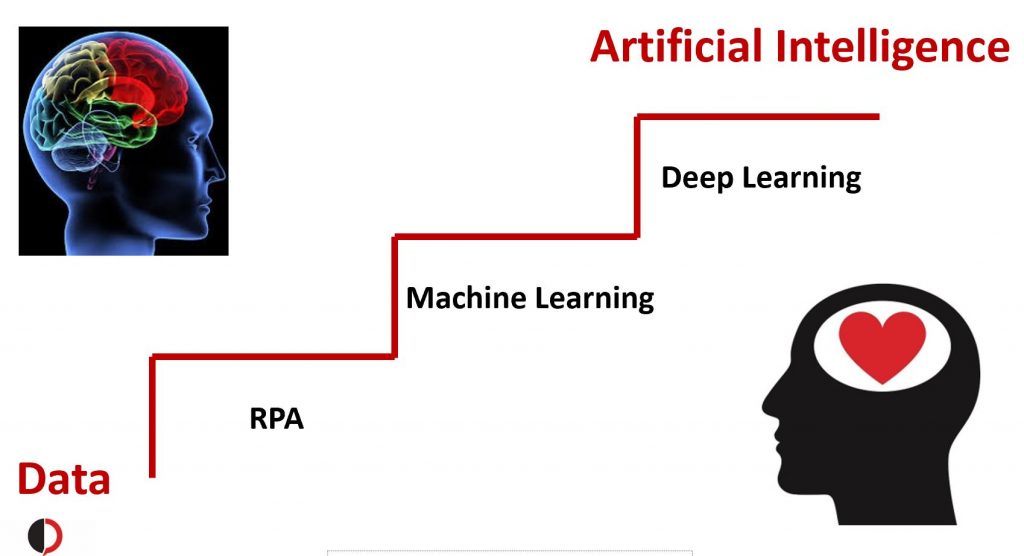

Think about the application of artificial intelligence so you can truly transform your financial institution and the existing roles of team members. AI exists to automate low value tasks that keep people away from more creative and valuable tasks.

Use these guidelines to choose a partner for your big-data initiative. Many providers (including financial institutions) consider tasks based on robotic process automation (RPA) to be AI. Our industry is beginning to see the demise of some of these providers. Please be aware of this.

Meet the Experts

I have invited five big-data experts whom I sincerely respect and admire to brainstorm with me on what it takes to drive success. We are hosting this discussion on September 10, 2019. If you would like to join the discussion or have questions for my panel, contact me at Sundeep@DigitalCredence.com.